Ad Hominem Ad Nauseam

It is common in the field of logic to object to ad hominem attacks on an argument. The objection is so strong that the use of an ad hominem attack in critiquing an argument is considered an informal or material logical fallacy. For those who aren’t quite sure what ‘ad hominem’ means, an ad hominem attack is one in which criticism is leveled at the arguer, typically with respect to some deficiency in makeup or character, rather than at the argument. The academically accepted approach (at least on paper) is to confine any criticism to an argument’s validity, independent of who is presenting that argument. Simply put, one should be picking on the message and not on the messenger.

But this approach, while charitable, is certainly one that can be called into question. Consider the courts of law. The rules of evidence allow opposing counsel, during cross-examination, to call into question the credibility of a witness on the grounds that the witness may be “biased”.

The obvious objection to applying this ‘exception’ or ‘violation’ to the ad hominem fallacy is that legal proceedings only flirt with logic but are not based solely on it. The recognition that findings of the law often don’t follow strictly from the laws of logic is well known and annoying. But is there really no logical rationale for attacking the credibility of the arguer?

Well, the attentive reader can obviously sense that I am going to argue that there are many occasions where attacking the arguer rather than the argument is clearly both a logical and charitable action. The rationale for throwing away the ad hominem fallacy is built on two pillars: 1) the primary one being appeal to authority and 2) the lesser one being sophistry that experts often use.

Let’s explore each of these rationales in turn.

Appeal to authority is, itself, considered to be a fallacy, but anyone who argues that point must be a true occupant of the ivory tower because almost all arguments concerning the physical world involve appeal to authority. For example, how many of us have observed an electron by performing Millikan’s oil drop experiment? Most of us depend on what ‘experts’ have discovered about the electron, including its mass, charge, and spin. How many of us have analyzed DNA and the cell replication process? And yet we believe overwhelmingly in the power of genetics, as evidenced by prenatal testing for birth defects and the popularity of 23andMe and similar tests. How many of us have combed through the climate change data to verify anthropogenic global warming? And yet we believe the Paris Accords are vital for our continued survival on planet Earth.

An overwhelming majority of the body of knowledge we claim to be accepted and common rests on the authority of experts. The Wikipedia article on the appeal to authority features the following quote:

Clearly Sagan was never an actual practicing scientist or, if he was, he was pretending that science was pure and noble when actual historic evidence shows numerous ignoble events. A small sample of this would include the fraud perpetrated by Jan Hendrik Schön as disclosed in Plastic Fantastic, a fraud that took in the majority of the condensed matter physics community based solely on his reputation (i.e., authority). It would include the increasing inability of science to reproduce many of the experiments published in so-called peer-reviewed journals, as documented in the very valuable review of the scientific paradigm by William A. Wilson in his article Scientific Regress. Wilson’s opening quote is particularly biting.

I can speak to the peer-review process with some authority (see, there it is again) as both a submitter and reviewer. Submittals and reviews are done by human beings who are motivated by reputational capital, possible fame and funding, and similar ‘human’ motivations. Therefore, the proper thing to do is to always question the motivations of any person who proffers an argument.

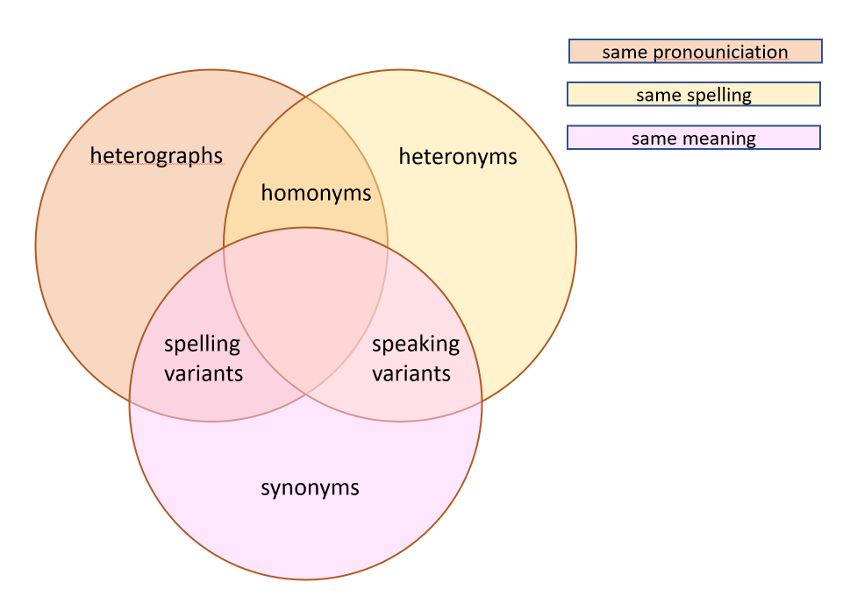

The purist would object to that last sentiment by reminding me that an argument stands on its own merit regardless of the reputation and motivation of the arguer. But this objection applies only to deductive arguments, and not even to all of these. Deductive arguments form a very small part of ordinary life even though academia thinks otherwise. An additional point is that deductive arguments, at least by modern symbolic standards, have some appalling properties.

So, a prudent approach to any argument is to remain skeptical about the arguer.

Now on to sophistry. The word sophist commands little if any respect, owing to the classic condemnation by Plato, who described these teachers as practitioners of deception, and to the description by Aristophanes, who characterized them as ‘hairsplitting wordsmiths’. Sophists were known to be able to argue and support two contrary positions, using ambiguities of language to attain power rather than pursue truth.

Often our current societal dialog centers around some pundit whose arguments mix one part ignorance or opinion with one part sophistry in an attempt to sway us to their position regardless of truth. Even when these modern liars use deductive logic, the premises usually rest on their authority. So why shouldn’t we come to the table loaded for bear, ready to ‘shoot the messenger’ with an ad hominem attack, just in case he turns out to talk out of both sides of his mouth?